So, I had to improvise a little bit in my Science Fiction Genre class the other day because a guest lecturer I had lined up was unable to join us. So I wound up showing a couple of anime from the Animatrix, the direct-to-DVD release that adds some of the back story to the two sequels to the Matrix. And I showed the class the first regular episode of the SyFy Channel's Battlestar Galactica series, which is entitled "33" and I think was the best episode in the entire run.

The original series from 1978 was clearly derivative of the first Star Wars film from the previous year, and an attempt to capture some of that sparkle for TV. It only lasted one season, followed by a short-lived attempt to revive it in 1980. While the original series has had its fans, simply put, it was not very good. Not horrible, mind you, just not very good.

The scenario was taken from the Book of Exodus, with Lorne Greene, well known to audiences then as the patriarch of the Cartwright clan on the long running western series Bonanza, leading a ragtag fleet of spaceships on a quest to find the mythical planet Earth. The fleet was all that was left of humanity, after an attack by the evil Cylon robot empire destroyed the 12 colonies, 12 worlds named after the 12 signs of the zodiac.

The original had an underlying religious sensibility, and in Battlestar Galactica 1980 there even was some interaction with angels, but the relationships were never clear.

Unlike the original, the remake was a high quality production, with a great deal of originality, and it very much captured a post-9/11 sensibility, really emphasizing the fact that humanity was almost entirely exterminated, and that the survivors were just barely hanging on. The 12 colonies were again associated with the 12 signs of the zodiac, and consistent with this, human culture was depicted as polytheistic—they would say gods where we would say God—and specifically rooted in the ancient Greek pantheon (e.g., Zeus, Apollo, Athena, etc.).

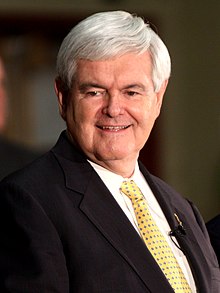

Interestingly enough, there's also a Mormon reference thrown in, a carry-over from the original series, whose creator, Glen Larson, was Mormon. Both series make reference to Kobol as the home of the human race, and Kolob in the Mormon faith is "the star or planet nearest to the throne of God" (see the Wikipedia entry on Religious and mythological references in Battlestar Galactica). What impact this may have on the candidacy of Mitt Romney, it is hard to say (a number of people have speculated on the possibility that Romney is a robot, and my friend Paul Levinson thinks he might even be a Cylon!). Perhaps if he chooses Newt Gingrich as a running mate, Newt having made a moon base one of his campaign promises, he'd have the science fiction vote sewed up.

Sound far-fetched? You may be surprised to learn that Reagan gained favor in the SF community due to his "Star Wars" Strategic Defense Initiative. Seems that many SF fans saw the militarizing of space as worthwhile in that it was a surefire way to get us up into space, and keep us there. Those damn peaceniks are all about feeding the hungry, curing diseases, eradicating poverty, and the like. Lewis Mumford saw quite clearly that the space program was as massive a waste of labor and resources as the building of the pyramids in the ancient world, noting that both constitute attempts to send a select few into their respective notions of the heavens. Rationally, I know he's right, although it's hard to shed that emotional attachment to the vision of space travel a la Star Trek.

Anyway, the humans in Battlestar Galactica were essentially as modern as us in most ways, and more so in regard to space travel, and the fact that their religion was based on the Greek gods can be seen as reflecting and symbolizing the fact that western culture is dominated by a secular humanist orientation that is rooted in Greek philosophy. By way of contrast, the Cylons believed in God--they were monotheists, and generally much more religiously oriented than the humans. And this being a post-9/11 series, this twist clearly reflects our anxiety about Muslims generally, and Islamic fundamentalism and the terrorist initiative that sprang from it more specifically.

I should note that back when the new series was on the air, I wrote several posts about it, and you can find them by clicking on Battlestar Galactica over on the side, down at the Labels gadget, where all of the labels are displayed in cloud formation. But I'm bringing all this up because I wanted to note that the theme music for the series, which has been widely applauded for its aesthetic appeal, has some Middle Eastern overtones to it. It also turns out that some elements of the theme come from a bit further to the east. There's a bit of singing in an unfamiliar (hence alien in a sense) language, and over on YouTube the person who posted this video, pastafarian69, noted the following:

Turns out, the singing in BSG's opening is actual lyrics, taken from the Gayatri Mantra: oṃ bhūr bhuvaḥ svaḥ tát savitúr váreniyaṃ bhárgo devásya dhīmahi dhíyo yó naḥ pracodáyāt

The Language is Sanskrit. Basically, it translates into something like: We meditate upon the radiant Divine Light of that adorable Sun of Spiritual Consciousness; May it awaken our intuitional consciousness.

He then added the lyrics to the video, as you can see below:

So, now, the music for Battlestar Galactica was created by Bear McCreary, who has also done music for the sequel series, Caprica (a major disappointment, the series that is, not the music), The Walking Dead (one of my favorites of current series), Eureka (a series that never lived up to its promise, and one that I gave up on), and Terminator: The Sarah Connor Chronicles (a great series that inexplicably never caught on and was canceled). If you're interested in his work, go check out the Bear McCreary Official Site.

And this brings me finally to the point of this post, which is to share with you a short video, one that is, appropriately enough for Blog Time Passing, entitled Temporal Distortion, and which features a soundtrack by, you guessed it, Bear McCreary. So, here goes:

Temporal Distortion from Randy Halverson on Vimeo.

And, according to the write up over on Vimeo:

What you see is real, but you can't see it this way with the naked eye. It is the result of thousands of 20-30 second exposures, edited together to produce the timelapse. This allows you to see the Milky Way, Aurora and other Phenomena, in a way you wouldn't normally see them.

In the opening "Dakotalapse" title shot, you see bands of red and green moving across the sky. After asking several Astronomers, they are possible noctilucent clouds, airglow or faint Aurora. I never got a definite answer to what it is. You can also see the red and green bands in other shots.

At :53 and 2:17 seconds into the video you see a Meteor with a Persistent Train. Which is ionizing gases, which lasted over a half hour in the cameras frame. Phil Plait wrote an article about the phenomena here blogs.discovermagazine.com/badastronomy/2011/10/02/a-meteors-lingering-tale/

There is a second Meteor with a much shorter persistent train at 2:51 in the video. This one wasn't backlit by the moon like the first, and moves out of the frame quickly.

The Aurora were shot in central South Dakota in September 2011 and near Madison, Wisconsin on October 25, 2011.

Watch for two Deer at 1:27

Most of the video was shot near the White River in central South Dakota during September and October 2011, there are other shots from Arches National Park in Utah, and Canyon of the Ancients area of Colorado during June 2011.

Is this video science fiction? Not exactly, not in a purist sense, but it does use science to create an alien and alienating, and in a sense fictional, sense of space and time. Perhaps we can speak, in an abstract sense, of a science fiction aesthetic that exists independently of any science fiction scenario? Or perhaps we need to create an entirely new category for this sort of experimental video?